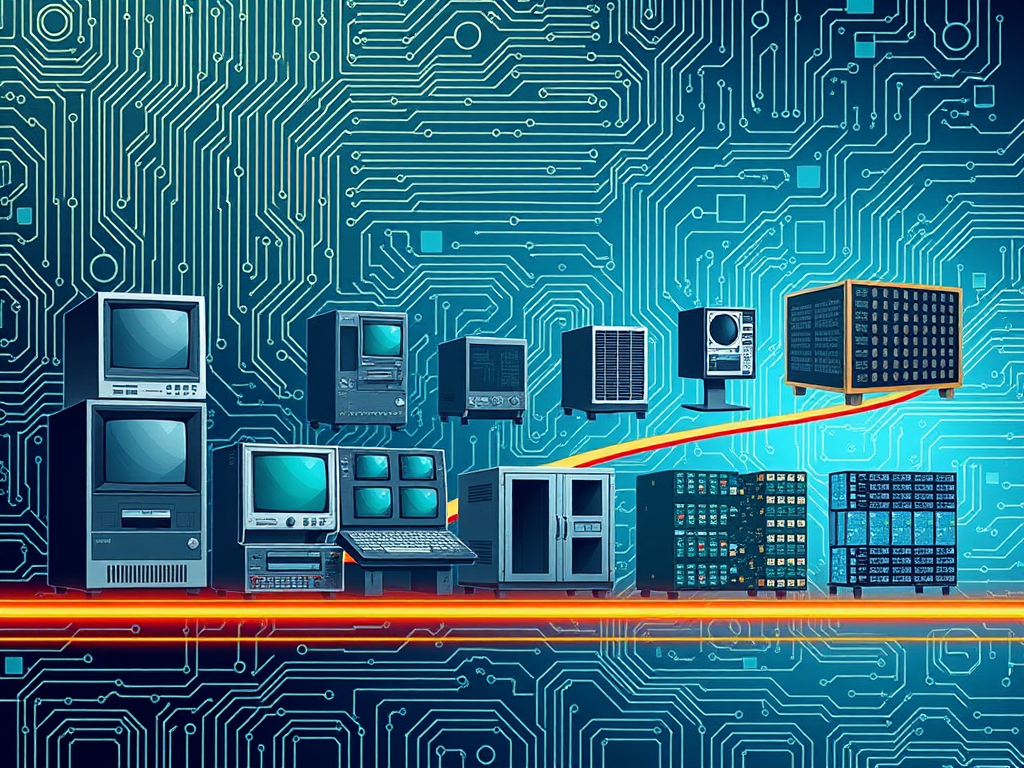

High-performance computing (HPC) has undergone a remarkable transformation since its inception, evolving from colossal mainframe systems to sophisticated clusters that drive today’s computational research and applications. This article explores the key milestones in the evolution of HPC, highlighting technological advancements and their impacts on various fields.

The Early Days: Mainframes

The journey of HPC began in the 1950s and 1960s with the advent of mainframe computers and the large scientific machines built alongside them. Systems such as the IBM 7030 Stretch (1961) and the CDC 6600 (1964) were groundbreaking in their ability to perform complex calculations at unprecedented speeds; the CDC 6600 in particular is often described as the first supercomputer. These machines were primarily used by government agencies and large corporations for scientific research, data processing, and simulations.

Mainframes operated on a batch processing model, where jobs were queued and executed sequentially. This limited interactivity and real-time computation, but the sheer power of these machines laid the groundwork for future developments in HPC. The architecture of these early systems was characterized by large, centralized processing units and extensive memory, which allowed them to handle substantial datasets.

However, the high cost and complexity of mainframes restricted access to a select group of organizations. As a result, researchers sought ways to improve computing capabilities while making technology more widely available.

The Rise of Supercomputers

In the 1970s, supercomputing matured into a distinct class of machines designed for the highest possible speed. The best-known example is the Cray-1, introduced in 1976. Earlier machines such as the CDC STAR-100 had already used vector processing, but the Cray-1 was the first to turn it into a commercial success. Supercomputers became essential tools in fields like weather forecasting, molecular modeling, and complex simulations.

The Cray-1, with its distinctive curved design and Freon-based cooling system, was a game-changer: it could execute more than 80 MFLOPS (million floating-point operations per second), with a theoretical peak of about 160 MFLOPS. This era saw the emergence of hardware built specifically for scientific applications, further pushing the boundaries of what was possible in computational research.

Supercomputers were incredibly expensive and limited in availability, often found in dedicated research facilities or government laboratories. Their architecture was increasingly parallel, allowing for the simultaneous execution of multiple processes, which set the stage for future developments in HPC. The introduction of SIMD (Single Instruction, Multiple Data) architectures during this time was pivotal, as it allowed the same instruction to be executed across multiple data points simultaneously.
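To make the SIMD idea concrete, here is a minimal sketch in plain Python. It only models the behaviour: one operation is applied to a fixed-width group of "lanes" per step, instead of to one value at a time. The names `LANES` and `simd_add` are illustrative, and the lane width of 4 is an assumption; real vector hardware performs each step as a single instruction, which Python itself does not expose.

```python
LANES = 4  # assumed vector width, for illustration only


def simd_add(a, b, lanes=LANES):
    """Add two equal-length lists, one group of `lanes` elements per step.

    Returns the result and the number of steps taken. Each step stands in
    for a single vector instruction applied to every lane at once.
    """
    if len(a) != len(b):
        raise ValueError("inputs must have the same length")
    result = []
    steps = 0
    for start in range(0, len(a), lanes):
        # One "instruction": the same add applied across a whole group.
        chunk_a = a[start:start + lanes]
        chunk_b = b[start:start + lanes]
        result.extend(x + y for x, y in zip(chunk_a, chunk_b))
        steps += 1
    return result, steps


values, steps = simd_add([1, 2, 3, 4, 5, 6, 7, 8], [10] * 8)
print(values, steps)
```

With eight elements and four lanes, the sketch needs two steps where a scalar loop would need eight additions, which is the essence of the speedup that vector machines delivered.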

The Shift to Distributed Computing

The 1990s saw a significant shift with the rise of distributed computing. Advances in networking and the proliferation of personal computers allowed researchers to connect multiple machines, forming clusters that could work together on complex problems. This democratization of computing power made HPC more accessible to a broader range of institutions.

Cluster computing architectures used commodity hardware, significantly reducing costs while maintaining high performance. The use of Linux as an operating system for clusters further contributed to this trend, enabling easier management and scalability. This period also saw the standardization of message-passing programming in MPI (Message Passing Interface), first released in 1994, which gave programs a portable way to exchange data between the nodes of a cluster.
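The message-passing style can be sketched without a cluster. The example below is not MPI: it uses Python threads and a `queue.Queue` as a stand-in, so that each "rank" owns a private slice of the data and shares its result only by sending a message to a root. The function name `run_ranks` and the choice of four ranks are assumptions made for illustration.

```python
import queue
import threading


def run_ranks(data, size=4):
    """Split `data` across `size` workers and sum it by passing messages.

    Each worker plays the role of a cluster node ("rank"): it works on its
    own slice and communicates only by sending a message to the root.
    """
    inbox = queue.Queue()  # the root's mailbox

    def worker(rank):
        # Each rank sums only its own slice; there is no shared accumulator.
        partial = sum(data[rank::size])
        inbox.put((rank, partial))  # "send" the partial sum to the root

    threads = [threading.Thread(target=worker, args=(r,)) for r in range(size)]
    for t in threads:
        t.start()

    # The root "receives" one message per rank and combines them.
    total = 0
    for _ in range(size):
        _rank, partial = inbox.get()
        total += partial

    for t in threads:
        t.join()
    return total


print(run_ranks(list(range(1, 101))))  # 5050
```

In a real MPI program the ranks would be separate processes, usually on different machines, and the send, receive, and combine steps would be calls into an MPI library instead of queue operations.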

With the advent of Beowulf clusters—essentially a collection of inexpensive, off-the-shelf computers linked together to work as a single system—researchers could harness the power of distributed systems without the prohibitive costs associated with traditional supercomputers. This shift opened new avenues for scientific research, allowing smaller institutions to engage in high-level computational work.

The Era of Multi-Core and GPU Computing

As the 2000s progressed, the focus shifted towards getting more performance out of each processor. The introduction of multi-core processors allowed for increased parallelism within a single chip, enabling more efficient computations. Manufacturers like Intel and AMD began producing CPUs that could run multiple threads truly in parallel, significantly boosting performance for multi-threaded applications. Single-threaded programs, by contrast, saw little direct benefit unless they were rewritten to use the extra cores.
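How much a program gains from additional cores depends on how much of its work can actually run in parallel. A common way to estimate this is Amdahl's law, which this article does not otherwise cover; it is sketched here purely as an illustration. The function name and the sample fractions are assumptions.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Estimated speedup under Amdahl's law: 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Compare a fully serial, a half-parallel, and a mostly parallel program
# on a hypothetical 8-core chip.
for p in (0.0, 0.5, 0.95):
    print(p, round(amdahl_speedup(p, 8), 2))
```

Under this model a fully serial program gets no speedup from eight cores, a program that is half parallel gets less than 2x, and one that is 95% parallel gets a little under 6x.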

At the same time, the rise of Graphics Processing Units (GPUs) revolutionized HPC. Originally designed for rendering graphics, GPUs proved to be exceptionally well-suited for parallel processing tasks. Their architecture, which consists of thousands of smaller cores, allowed them to perform many calculations simultaneously, making them ideal for tasks like deep learning, simulations, and complex numerical computations.

The development of frameworks like CUDA (Compute Unified Device Architecture) by NVIDIA further facilitated the integration of GPU computing into HPC applications. Researchers began to explore the potential of GPUs for scientific computing, leading to substantial performance improvements in various fields, including biomedical research, climate modeling, and financial simulations.

Modern Clusters and the Cloud

Today, HPC is characterized by modern clusters that combine thousands of interconnected nodes. These systems pair multi-core CPUs with GPUs and other specialized accelerators, and the mix is often tuned to the workloads a given system is expected to run.

Additionally, the rise of cloud computing has transformed the HPC landscape. Organizations can now access vast computing resources on demand, reducing the need for heavy upfront investments in hardware. Cloud-based HPC platforms, such as Amazon Web Services (AWS) and Microsoft Azure, provide scalability, flexibility, and ease of use, allowing researchers to focus on their work rather than infrastructure management.

The cloud has also facilitated collaboration across institutions and geographical boundaries. Researchers can share resources, datasets, and computational power, enabling more significant advancements in fields such as genomics, materials science, and artificial intelligence.

The Future of HPC

As we look to the future, several trends are shaping the evolution of HPC. Quantum computing, for instance, promises to revolutionize the field by solving problems that are currently intractable for classical computers. Quantum algorithms have the potential to outperform traditional algorithms in areas such as cryptography, optimization, and simulation of quantum systems.

Additionally, advancements in machine learning and artificial intelligence are driving the need for ever-increasing computational power. The integration of AI with HPC is paving the way for new methods in data analysis, predictive modeling, and even automated scientific discovery.

Sustainability is also becoming a critical consideration in HPC development. Efforts to improve energy efficiency and reduce the carbon footprint of data centers are gaining momentum as the demand for computing power continues to rise. Innovations in cooling technologies, energy-efficient processors, and green data centers are essential for meeting the challenges of sustainable computing.

Conclusion

The evolution of high-performance computing from mainframes to modern clusters highlights the dynamic nature of technology and its impact on research and industry. Each phase of this journey has brought about significant advancements in computational capabilities, making HPC more accessible and powerful.

As HPC continues to advance, it is likely to play a pivotal role in addressing some of the world’s most pressing challenges, from climate change to medical research. The integration of emerging technologies, coupled with a commitment to sustainability, will shape the future of HPC and keep it an essential tool for discovery and innovation in science and technology.